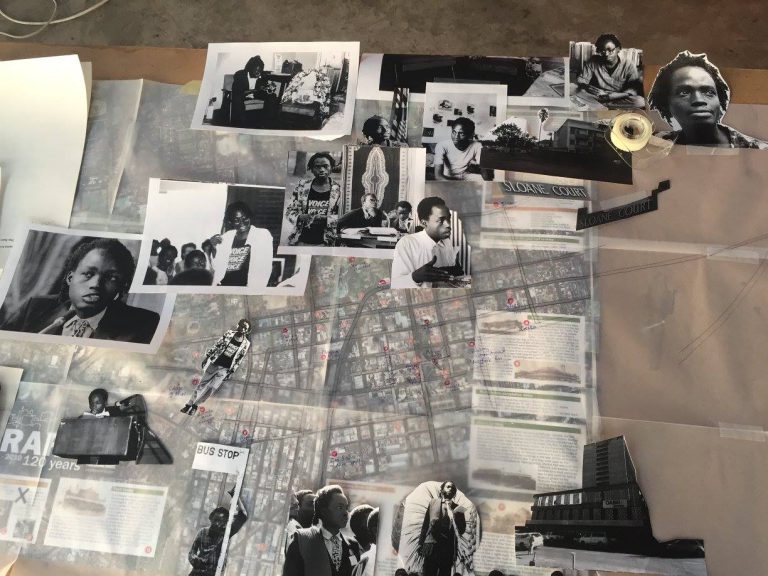

maps the tangible and intangible resources needed to create AI and machine learning in order to better understand their social and political effects. Just as maps are specific interpretations of space that are often wrongly seen as objective representations, she unpacks the misconception that AI and machine learning are “bloodless” elements in “purely technical” systems. Instead, she shows how, whether deployed by governments or private businesses, these systems go on to quietly and dramatically shape the world around us. By looking at their material and epistemological origins, she writes, the scars they leave on the earth and people’s lives become clear. Paying attention to this is the first step in making better-informed decisions about these systems, which will shape our futures.

As Hurricane Ida wreaked destruction across the south-eastern United States, the Caribbean and Venezuela, we spoke to Kate over email about the problems with AI and the misconceptions that surround it.

You’ve been working on the topic of AI and automated systems for many years. This space is full of pseudoscience. What is one of the most ridiculous stories that comes to mind?

on these issues. But one thing I’ve noticed is how quickly things that seemed ridiculous are being applied in ways that could cause harm. The pandemic has accelerated this phenomenon. A recent review in the British Medical Journal looked at over 200 machine-learning algorithms for diagnosing and predicting outcomes for Covid-19 patients. Some made grandiose claims and sounded very impressive. But the study found that none of them were fit for clinical use – in fact, the authors were concerned that several might have harmed patients. In other cases, things that look fun, like FaceApp, can actually be harvesting images of your face to sell or to train models for facial recognition. So there’s an increasingly fine line between silly and seriously problematic.

Where does the popular understanding of AI systems as technical systems, and therefore somehow objective and neutral, come from? What are its effects, and how can or should these perceptions be changed to align with the reality?

the early years of AI. Even some of the earliest figures in AI were concerned about the myth of neutrality and objectivity. Joseph Weizenbaum, the man who created ELIZA back in 1964 at MIT, was deeply concerned about the “powerful delusional thinking” that artificial intelligence could induce – in both experts and the general public.

This phenomenon is now more commonly called “automation bias”. It’s the tendency for humans to accept decisions from automated systems more readily than other humans, on the assumption that they are more objective or accurate, even when they are shown to be wrong. It’s been seen in lots of places, including airplane autopilot systems, intensive care units and nuclear power plants. It continues to influence how people perceive the outputs of AI and undermines the whole idea that having a human in the loop automatically creates forms of accountability and safety.

How should people be thinking about AI? What perspectives can help us to move our discussions beyond the technical achievements of the technology?

and political phenomenon. In Atlas of AI, I look at how AI is becoming an extractive industry of the 21st century – from the raw materials taken out of the earth, to the hidden forms of labour extracted all along the supply chain, to the data extracted from all of us as data subjects. Taking this wider political economy approach can help us see the wider effects of AI beyond the narrow focus on technical innovation. After all, AI is politics all the way down. Rather than being inscrutable and alien, these systems are products of larger social and economic structures with profound material consequences.

How do you explain companies’ obsession with talking about AI ethics, developing framework after framework? How do we move away from this ethics framing?

for AI ethics in Europe in 2019 alone. These documents are often presented as products of a “wider consensus” but come primarily from economically developed countries, with little representation from Africa, South or Central America, or Central Asia. What’s more, unlike medicine or law, AI has no formal professional governance structure or norms – no agreed-upon definitions and goals for the field or standard protocols for enforcing ethical practice. So tech companies rarely suffer any serious consequences when their ethical principles are violated.

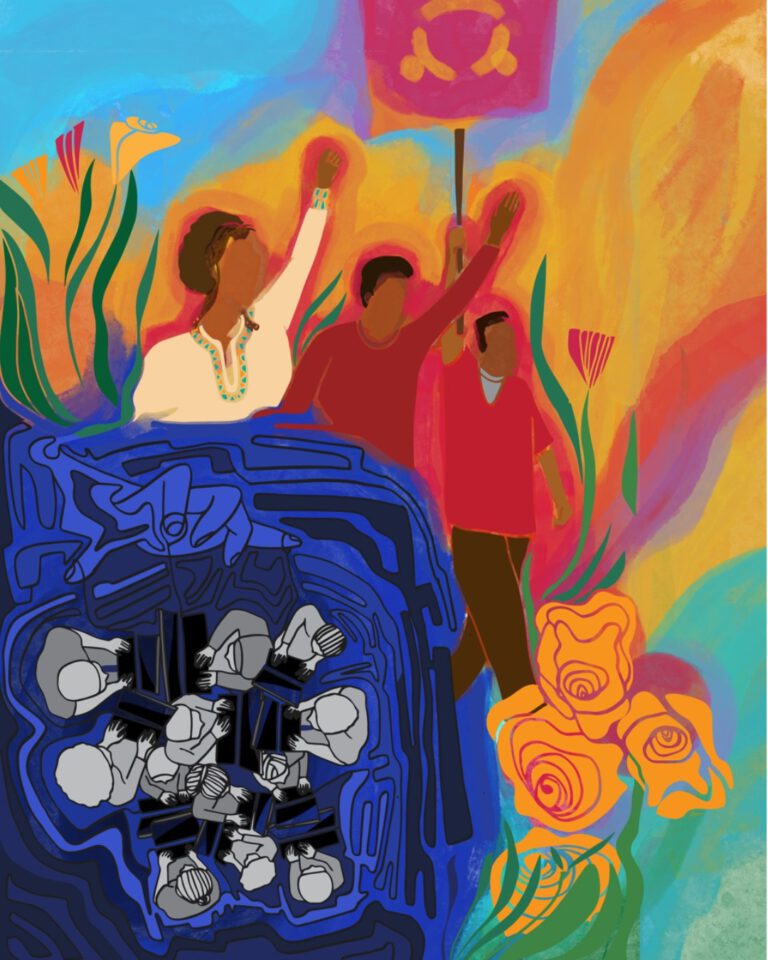

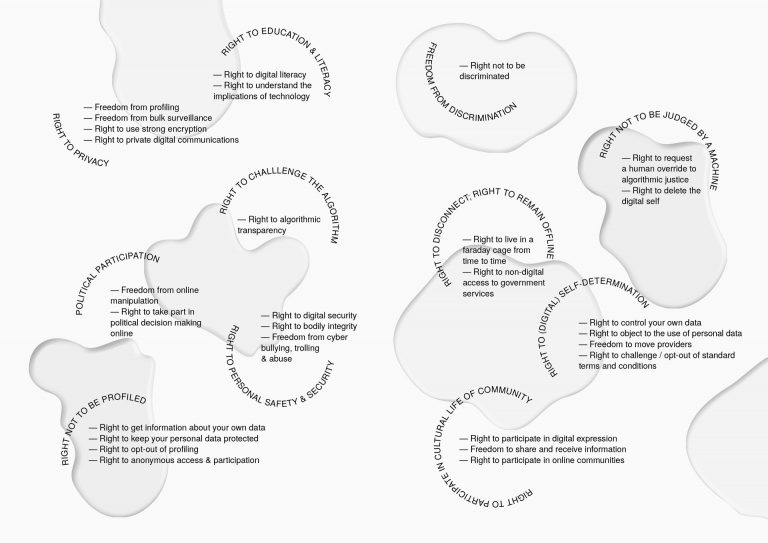

Instead, we should focus more on power, an observation that political theorists such as Wendy Brown and Achille Mbembe have been making for many years. AI invariably amplifies and reproduces the forms of power it has been deployed to optimize. Countering that requires centring the interests of the communities most affected, and those who are left out of the usual conversations in technical design and policy making. Instead of glorifying company founders and venture capitalists, we should focus on the experiences of those who are disempowered, discriminated against and harmed by AI systems. That can lead to a very different set of priorities – and the possibility of refusing AI systems in some domains altogether.

You talk about the components which allow AI to exist as being embodied and material – essentially showing that they are the result of different kinds of supply chains being brought together. Why do you think the connections between these intangible, digital systems, the material infrastructure that hosts them, and the people who are affected by them seem so difficult to make?

are intentionally obfuscated. The history of mining, which I address in the book, has always been left at arm’s length from the cities and communities it has enriched.

Supply chains for information capitalism are extremely

hard to research – even for the tech companies that rely

on them. When Intel tried to remove conflict minerals

from its own supply chain, it took over four years and they

had to assess 9,000 suppliers in over 100 countries. I’m

glad you mentioned Thea Riofrancos’s work. I’m also influenced by the work of Martín Arboleda. His book, Planetary

Mine is great on the way the mining industry has

been reorganized into logistical networks and intermingled

with information industries. The philosophers Michael

Hardt and Antonio Negri call this the “dual operation

of abstraction and extraction” in information

capitalism: abstracting away the material conditions of

production while at the same time extracting more information

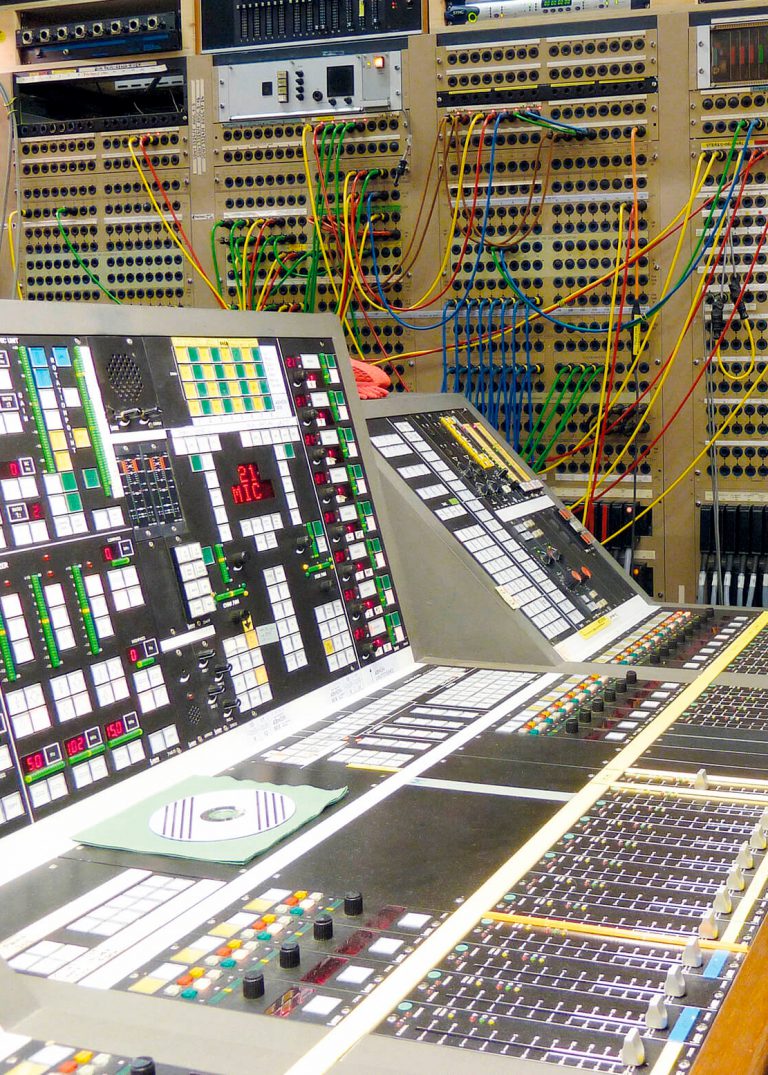

and resources. The description of AI as fundamentally

abstract distances it from the energy, labour and

capital needed to produce it, and the many different kinds

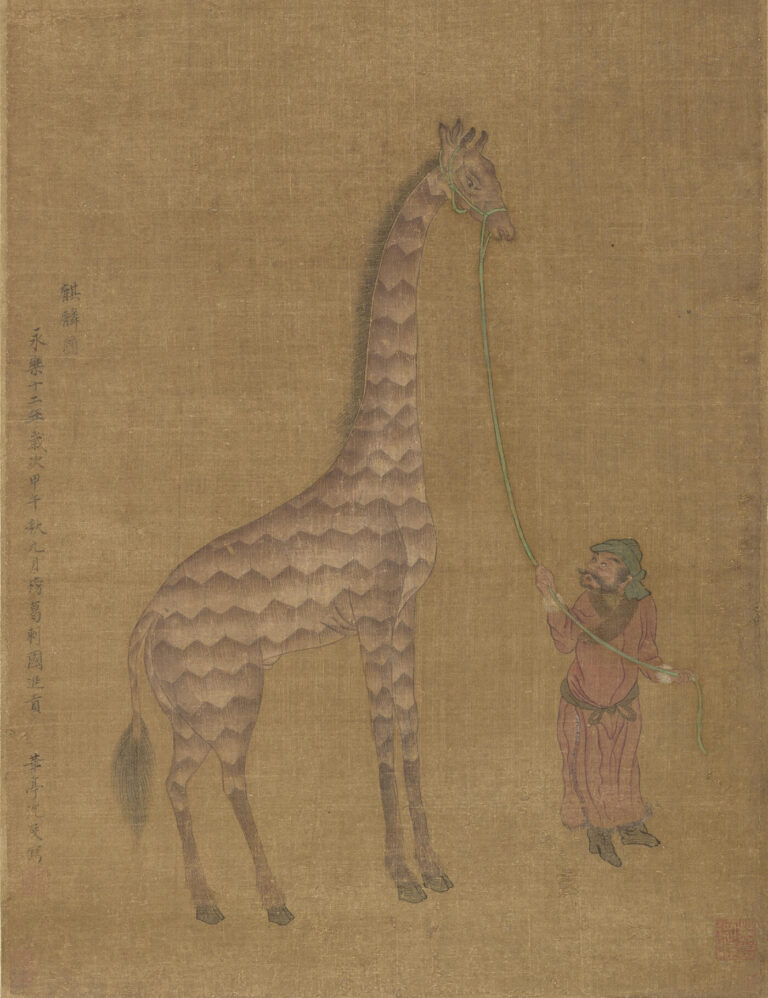

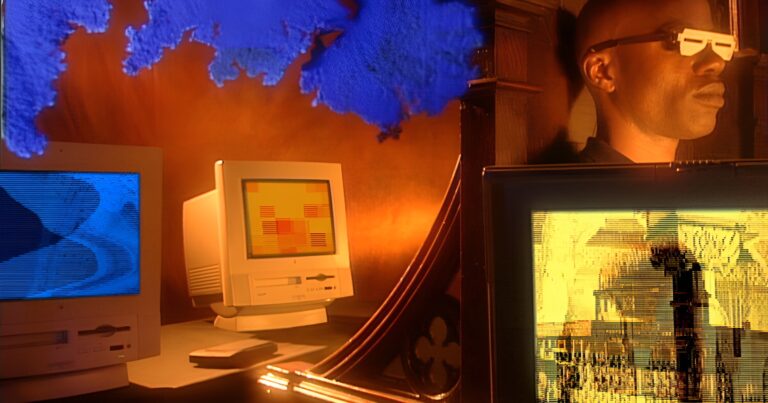

of mining that enable it. So when we see images of AI, in

the press or on an image search, it’ll most often be floating

blue numbers, fluffy clouds, white robots and the like,

which further abstracts the conversation away from AI’s

material and extractive conditions and consequences.

To end on a more positive note! What, to your mind, should come next?

doing this work. There are so many organizations

connecting issues of justice across climate, labour and

data. That’s incredibly exciting to see. Of course, we are

facing real time pressure now. The IPCC report is just

another reminder of why we can’t stall or make minor

changes around the edges. Understanding the

connections between the computational systems we use

and their planetary costs is part of asking different

questions, and fundamentally remaking our relationship

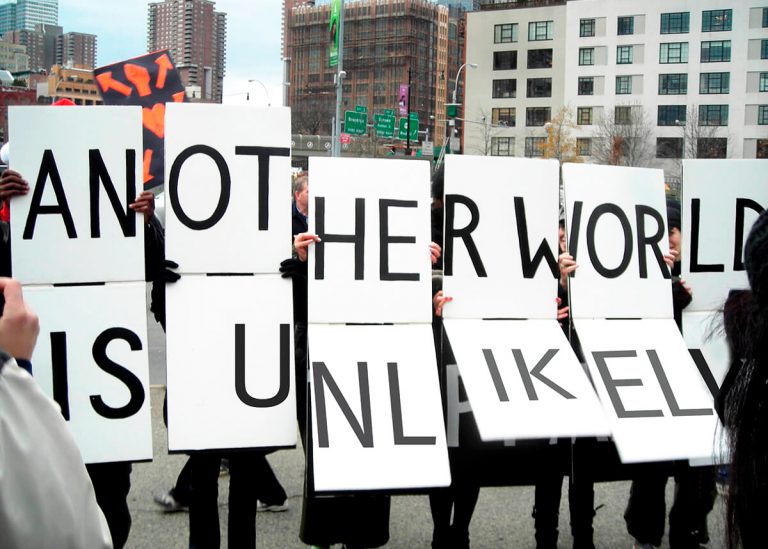

to the Earth and each other. Or as Achille Mbembe puts

it, not only a new imagination of the world, “but an

entirely different mapping of the world, a shift from the

logic of partition to the logic of sharing.”