Mojez: Let’s kick off this conversation with some information about yourself. How did you become a content moderator for ChatGPT and how did you feel about the job?

Mophat: I got into the job through a company called Samasource. I was given some tests, which I passed, followed by a two-week training session, after which I was enrolled in content moderation for ChatGPT. I was very excited when I got the job, but my experiences later on changed everything – even my perception of the job itself.

Mojez: Everyone talks about AI and its potential to enhance productivity, boost performance and improve our everyday lives. While most people believe that AI works magically, only some are aware of the human labour involved in developing these systems. Can you describe your work as a content moderator for ChatGPT? What content and data did you moderate and label?

Mophat: Human labour is fundamental in developing AI systems. This includes tasks like data collection, data annotation, model training, algorithms and content moderation. As a content moderator for ChatGPT, I focussed on ensuring that generated text aligned with community guidelines, moderating content ranging from conversations on everyday topics to sensitive or inappropriate content.

Specifically, I was working on sexual content. This involved labelling and filtering out content that violated platform policies, ensuring topics were safe and respectful. The data I moderated were text inputs addressing the broader topic of sex. I was ensuring compliance with ethical standards and legal regulations.

The idea was that ChatGPT would not generate sexual content when you write something sexual; the tool would self-regulate. But before reaching this point, it has to be trained by human beings. That is what I was doing.

Mojez: How does this work differ from social media content moderation?

Mophat: For social media content moderation, you moderate live videos, pictures and texts made by human beings. Social media cannot self-regulate. But for models like ChatGPT, the aim is that they will. This means that if you type something illegal, it won’t generate that kind of information. For those models to self-regulate or self-moderate, they must be trained by people. Humans have to label those initial texts as hate speech, illegal content, sexual content, so the model can learn to self-regulate. It has to be trained by people.

Mojez: Why is human labour crucial for the functioning of AI systems and what does your work prevent from happening? Do you think human workers like you will always be involved in the AI supply chain?

Mophat: Human labour is essential for AI systems to function effectively as it ensures the accuracy, fairness, and ethical standards of algorithms. My work as a content moderator was to prevent harmful or inappropriate content from being disseminated. This safeguarded users from exposure to offensive and misleading information. For instance, if you want to generate text about child molestation, this tool will not be able to provide that kind of information. That’s because of our work. While advancements in AI automation are ongoing, human workers will likely continue to play a crucial role in the supply chain, especially if the task requires decision-making. You know, these machines don’t have the capability to make decisions themselves. And these AI systems are not empathetic and don’t give context. Because these machines lack essential human judgement and critical thinking, people will continue to work on them.

Mojez: What challenges did you face as a content moderator for ChatGPT and how have these experiences influenced your advocacy for ethical AI? How would you define ethical AI?

Mophat: One challenge I encountered was the volume and diversity of content I reviewed daily. I could classify more than 700 texts and paragraphs of text per day, many of which were very graphic. Another was being exposed to very sensitive and traumatising sexual content. For example, we were working on texts that touched on necrophilia, child sexual abuse and rape, among other illegal sexual activities. We also had corrupt leaders who didn’t give us access to mental health support, which was crucial.

These experiences have underscored the importance of ethical AI practices and labour practices, prompting me to advocate for transparency, accountability and user safety in AI development. There was no transparency at all, no one was accountable – even for our mental health. That’s why I’m doing all this stuff: to ensure that AI systems support human rights, mitigate biases, and promote the social well-being of people. My view is that AI cannot be ethical if it’s trained immorally, by exploiting people in economically difficult areas, like people in the global south.

Mojez: What in your opinion are the most significant human costs associated with the development and deployment of AI technologies like ChatGPT?

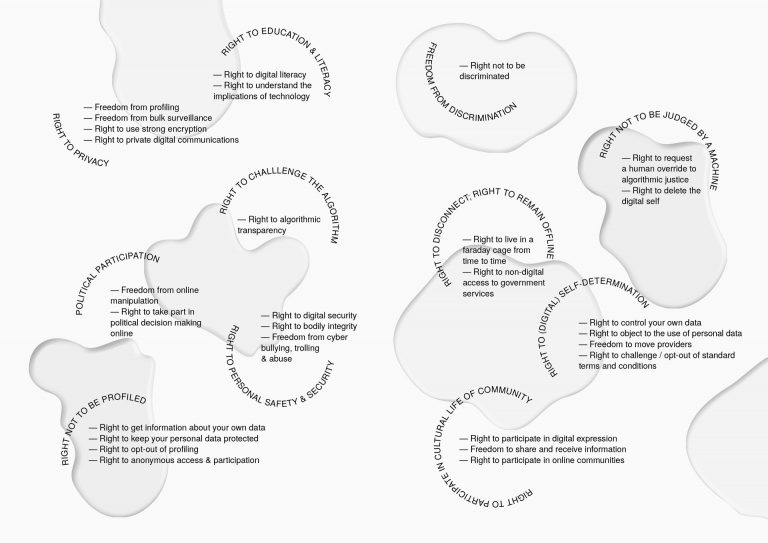

Mophat: In my view, and speaking generally, the most significant human costs include potential job displacements. We’ve had situations where authors and writers complain that their writing is taken without their consent and used to train these machines. Privacy rights are also eroded when someone takes material that you put online and uses it to train language models like ChatGPT without your consent. And then, for the workers who train these machines, there is the psychological impact. If they’re not given access to professional psychiatrists who can provide appropriate mental support, it’s also a risk to them.

We also have concerns about the misuse of AI for surveillance. For example, someone scans your face, and it’s biased. We’ve also had cases of mistaken identity where these machines provide articles that do not reflect the person. There are many issues of manipulation and control stemming from AI, which lead to threats to individuals’ freedom and to democratic principles.

Mojez: How can AI companies improve their practices to protect both the user and the workers involved in the lifecycle of AI products?

Mophat: AI companies can prioritise user safety, implement robustly coded moderation policies, provide adequate support and resources for workers, and conduct regular audits to identify and address biases and ethical concerns in their AI systems. Additionally, transparency in AI development, data usage and decision-making processes is crucial for building trust with users and workers. There is also a need for AI companies to be transparent regarding their labour supply chain, as this will help increase accountability and reduce the exploitation of workers. Data shows that in the global south, especially in Kenya, people work under very exploitative conditions. If there is transparency in their labour supply chain, this can be reduced.

Mojez: What role do you think legislation should play in mitigating the adverse effects of AI on society, especially on vulnerable populations?

Mophat: Legislation will be critical in regulating AI technologies to mitigate adverse effects, protect vulnerable populations, and ensure accountability and transparency in AI development. This can include establishing clear guidelines for privacy, algorithmic transparency, fairness and accountability, as well as mechanisms for oversight and enforcement to hold AI companies accountable for any harmful impacts on society. For example, in my own case, my colleagues and I worked for ChatGPT, and there were no such guidelines in place. They could get away with it because there was no legislation to oversee them.

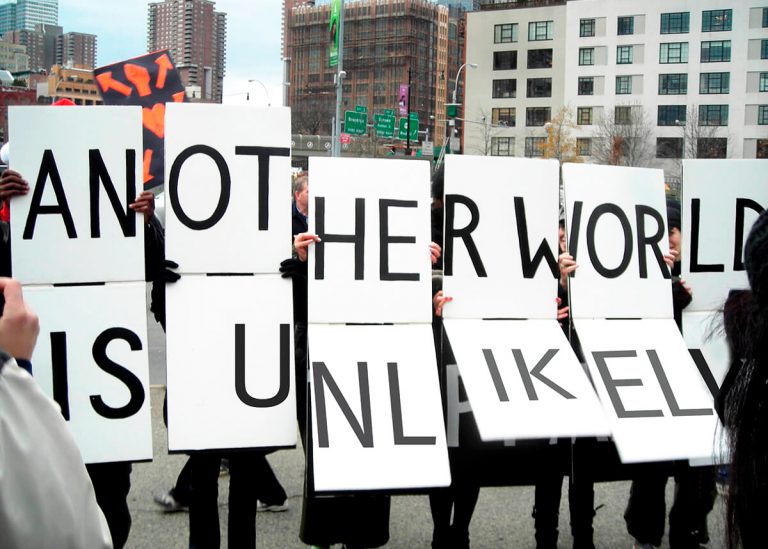

Mojez: It seems that the buzz around AI and the technology behind it will persist for a long time. What are your hopes for the future of AI and humanity?

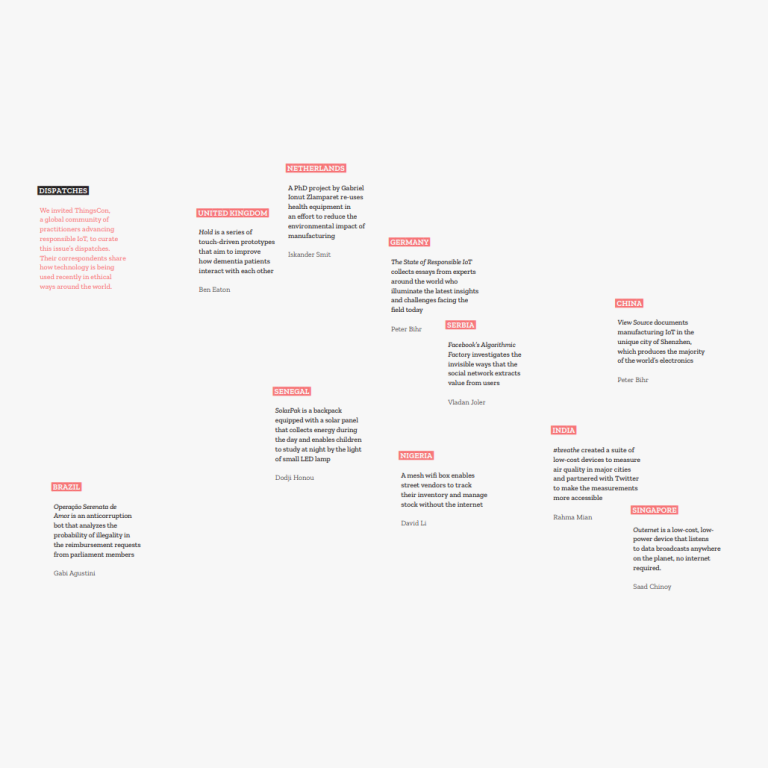

Mophat: Well, my hope for the future of AI is that it will be developed and deployed as ethically and inclusively as possible. When I talk of inclusivity in AI development and deployment, I mean this: laws that regulate AI have been implemented in the European Union, but most of this work is done in Africa. If Africans are not involved in lawmaking, lawmakers will not have a true picture of what needs to be included in their policies. Inclusivity should be compulsory in policy to ensure it benefits all humanity without intensifying inequalities or harming vulnerable populations. If this happens, I envision AI being used to address pressing global challenges, enhance human capabilities, and promote sustainable development while respecting human rights, diversity and dignity.

Mojez: Finally, you have started your own NGO. What is your mission, and how are you trying to effect change?

Mophat: The NGO I founded is called Techworker Community Africa, abbreviated as TCA. As TCA, we aim to empower, inform and support tech and data training workers in Africa, ideally in advocating for their rights and career advancement, because we are trying to connect them to better-paid data annotation jobs. Most data labellers are paid less because there is a middleman called a BPO, which stands for business process outsourcing. When work goes through a BPO, they do some nasty business and the labellers end up getting very low pay. We are trying to bridge that gap so that we can have clients bring work directly to tech workers. To do this we are developing a data annotation platform for clients who don’t want to exploit people. They can bring their work directly to the platform and workers can work directly and indirectly with the client. Our mission is to create a supportive ecosystem that ensures dignity, recognition and improved working conditions for tech workers. Capacity building, advocacy, training and developing careers – that’s what we’re doing, that’s what TCA does.